How We Build the Metaverse

GT Systems CTO Rhett Sampson looks at what’s needed to deliver the metaverse and how much of it is already available.

There is a lot of fuss about the metaverse at the moment. The good news is that the pieces we need to build it have finally arrived. So, the fuss is probably justified this time around. Everyone is asking: “What is the Metaverse? What are the pieces we need to build it? And how do we do that?”

It doesn’t take long to work out that, if we are going to join up a diverse set of existing sub-universes, such as Fortnite, Minecraft, World of Warcraft, Roblox, Decentraland, even Facebook (oops, Meta) into the Metaverse, then they will all need to “talk” to each other. Talk how? They need to be able to exchange assets, characters (avatars), reputation, skills, experience, tools, weapons, clothing, potions and loot; and in the case of business worlds such as architecture, they need to be able to exchange drawings and models that interwork and follow exactly the same set of rules and dimensions. In real time. Most importantly, we need to be able to buy and pay for stuff in all the different stores in all the different sub-universes as we move between them. Blockchain and cryptocurrencies are obvious candidates for that.

Standards

First and foremost, we need a set of standards. The Roman road builders and the American rail tycoons worked this out a long time ago. So, who are the road and rail builders of the Metaverse? The first is Pixar. They’ve been building CGI (computer-generated imagery) worlds as long as anyone. They realised ten years ago that they needed a set of standards and invented Universal Scene Description (USD).

Image Credit: NVIDIA

“Universal Scene Description (USD)… the first publicly available software that addresses the need to robustly and scalably interchange and augment arbitrary 3D scenes that may be composed from many elemental assets.”

https://graphics.pixar.com/usd/release/intro.html

Tools

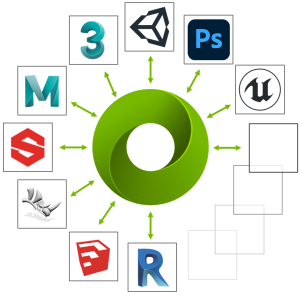

Next, we need a set of tools to do that. One option is Nvidia’s Omniverse (named one of the top 100 inventions of 2021 by Time Magazine):

“Omniverse… a scalable, multi-GPU real-time reference development platform for 3D simulation and design collaboration… based on Pixar’s Universal Scene Description and NVIDIA RTX™ technology.”

https://developer.nvidia.com/nvidia-omniverse-platform

The beauty of this is that it allows Metaverse assets (e.g. characters or avatars and their rendered virtual worlds) and changes in those worlds (e.g. a character’s movement) to be exchanged as a highly efficient “metalanguage and metadata”, which significantly reduces bandwidth requirements and helps ensure interoperability. In short, Omniverse is the highly sophisticated and efficient ontology or “language” of the metaverse.
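To make the bandwidth point concrete, here is a minimal sketch in Python. It is not USD or Omniverse code and does not reflect their actual wire formats; the scene layout, the `/World/Prop_N` paths and the helper names (`make_scene`, `diff`, `apply_delta`, `wire_size`) are all assumptions made up for illustration. It simply compares the size of sending a whole scene with the size of sending only what changed when one character moves.

```python
import copy
import json


def make_scene(n_objects: int) -> dict:
    """Build a toy scene: object path -> attributes (hypothetical layout)."""
    return {
        f"/World/Prop_{i}": {
            "translate": [float(i), 0.0, 0.0],
            "mesh": f"prop_{i}.usd",
        }
        for i in range(n_objects)
    }


def diff(old: dict, new: dict) -> dict:
    """Return only the attributes that were added or changed, keyed by path.

    Deletions are not handled; this sketch only covers additions and edits.
    """
    delta = {}
    for path, attrs in new.items():
        old_attrs = old.get(path, {})
        changed = {k: v for k, v in attrs.items() if old_attrs.get(k) != v}
        if changed:
            delta[path] = changed
    return delta


def apply_delta(scene: dict, delta: dict) -> dict:
    """Apply a delta in place on the receiving side and return the scene."""
    for path, attrs in delta.items():
        scene.setdefault(path, {}).update(attrs)
    return scene


def wire_size(obj: dict) -> int:
    """Bytes needed to send obj as UTF-8 JSON (a stand-in for a real format)."""
    return len(json.dumps(obj).encode("utf-8"))


before = make_scene(100)
after = copy.deepcopy(before)
after["/World/Prop_3"]["translate"] = [3.0, 1.0, 0.0]  # one object moves

delta = diff(before, after)
print("full scene bytes:", wire_size(after))
print("delta bytes:     ", wire_size(delta))
```

The receiver only needs `apply_delta` and the small delta to stay in sync, which is the general idea behind exchanging changes rather than whole worlds.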

Source of Truth

We also need a “source of truth” for the Metaverse, a repository of everything that makes up the Metaverse and its sub-universes that enables publishers to “share and modify representations of virtual worlds”. We could keep those sources of truth in their respective sub-universes and use USD and Omniverse to exchange information about them. Or we could do what Nvidia have done and have a central repository, Nucleus.

Nucleus solves the N² interface problem (for 100 sub-universes to exchange data with each other we need 100² or 10,000 interface connections speaking all their different languages). But it raises questions of scale: a central repository would have to be VERY BIG and VERY FAST. This could be beyond even the current capabilities of Nvidia supercomputers, at least in a centralised architecture.
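The arithmetic behind the interface problem can be sketched in a few lines of Python. The function names are made up for illustration. Counting one adapter in each direction between every pair of worlds gives N × (N − 1), which the rule of thumb rounds up to N²; with a shared hub or common format, each world needs only its own connection.

```python
def pairwise_interfaces(n: int) -> int:
    """Directed point-to-point adapters: each of n worlds talks to the other n - 1."""
    return n * (n - 1)


def hub_interfaces(n: int) -> int:
    """With a shared hub or common format, each world needs one interface."""
    return n


for n in (10, 100, 1000):
    print(f"{n} worlds: {pairwise_interfaces(n)} pairwise vs {hub_interfaces(n)} via a hub")
```

For 100 worlds that is 9,900 adapters against 100, which is the gap a central repository or a common language is meant to close.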

The solution to this would be a network that can store the Metaverse assets and understands Omniverse commands. That network is SPAN-AI™. We speak Metaverse and we optimise content caching and routing in the network. The network is the cloud. We also speak native blockchain and Merkle tree; in fact, any content-based storage and distribution “language”.
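For readers new to content-based storage, the following Python sketch shows the general idea using only the standard library. It is not SPAN-AI code; the `ContentStore` class and `merkle_root` function are hypothetical names, and SHA-256 with a duplicate-the-last-node rule for odd levels is just one common convention. Content is addressed by the hash of its bytes, and a Merkle root summarises a set of chunks in a single hash.

```python
import hashlib
from typing import Dict, Sequence


def content_id(data: bytes) -> str:
    """Address content by the SHA-256 hash of its bytes."""
    return hashlib.sha256(data).hexdigest()


class ContentStore:
    """Toy in-memory content-addressed store (hypothetical, for illustration)."""

    def __init__(self) -> None:
        self._blobs: Dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        cid = content_id(data)
        self._blobs[cid] = data  # identical content always lands on the same key
        return cid

    def get(self, cid: str) -> bytes:
        data = self._blobs[cid]
        if content_id(data) != cid:  # any node can verify what it serves
            raise ValueError("stored content does not match its address")
        return data


def merkle_root(chunks: Sequence[bytes]) -> str:
    """Hash chunks pairwise, level by level, until a single root remains."""
    if not chunks:
        raise ValueError("need at least one chunk")
    level = [hashlib.sha256(c).digest() for c in chunks]
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # assumption: duplicate the last node on odd levels
        level = [
            hashlib.sha256(level[i] + level[i + 1]).digest()
            for i in range(0, len(level), 2)
        ]
    return level[0].hex()


store = ContentStore()
avatar_id = store.put(b"avatar mesh bytes")
print("content id: ", avatar_id)
print("merkle root:", merkle_root([b"chunk-0", b"chunk-1", b"chunk-2"]))
```

Because the address is derived from the content itself, any cache in the network can serve a copy and the receiver can check it, which is what makes this style of storage a natural fit for distribution.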

SPAN-AI Speaks Metaverse

SPAN-AI is fully distributed, elastic (virtual), content-based and intelligent. EVERY node in a SPAN-AI Universal Content Distribution Network (UCDN) is autonomous and self-optimising. Every device on the network, whether it is a server, router, switch, gateway, PC, phone, VR/AR headset, etc., is a compute, storage and routing element of the network. That makes the SPAN-AI network a giant, distributed Metaverse computer, far more powerful than any centralised supercomputer and far more efficient because it is as close to consumers as possible.

SPAN-AI fundamentally understands commands such as “publish” and “subscribe” (the core commands of Nucleus) and also understands Omniverse/Metaverse commands to create and share content. In short, SPAN-AI is the “glue” of the Metaverse that brings all the sub-universes together by speaking native Metaverse and caching Metaverse content and assets in the network. Nucleus would be a “super-node” in that network, as would every other world.
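The publish and subscribe pattern itself is simple, and a minimal in-memory sketch in Python may help. This is neither the Nucleus API nor SPAN-AI code; the `Broker` class, the topic string and the shape of the change message are assumptions made up for illustration.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List

# A handler receives the topic and a dict describing the change.
Handler = Callable[[str, dict], None]


class Broker:
    """Toy publish/subscribe hub (hypothetical, single process, no networking)."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        """Register interest in a topic, e.g. one scene or sub-universe."""
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, change: dict) -> int:
        """Deliver a change to every subscriber of the topic; return how many got it."""
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(topic, change)
        return len(handlers)


broker = Broker()
received = []
broker.subscribe("worlds/demo/scene", lambda topic, change: received.append((topic, change)))

delivered = broker.publish("worlds/demo/scene", {"path": "/World/Avatar", "translate": [1.0, 0.0, 0.0]})
print("delivered to", delivered, "subscriber(s):", received)
```

In a distributed version of this idea, each node would play the broker role for the content it caches, so a publisher never needs to know who or where its subscribers are.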

Hollywood Already Gets It

Hollywood has been grappling with these production issues for decades. Many modern movies are a mix of live actors and scenes and “green screened” computer generated characters and scenes. Motion Picture Laboratories (Hollywood’s super techies) have published a vision for a future production platform that has many features in common with the Metaverse described above. It lays out a path for The Evolution of Media Creation:

“…to enable seismic changes in media workflows with one objective in mind – to empower storytellers to tell more amazing stories while delivering at a speed and efficiency not possible today.”

We Can Do This Now

We can do all this now. We just need to “join up the pieces” and build it. The good news is that GT Systems is working with pioneers to build a global, next-generation network to do exactly this on Earth and in space. We have the foundations of a set of standards. We can extend them to cover this vision. This blog is a call to the movie, gaming, blockchain and metaverse industries to do exactly that: come together to define the standards, platforms and network on which to actually build the Metaverse; and then to build it.